Hi @ZackerayDove

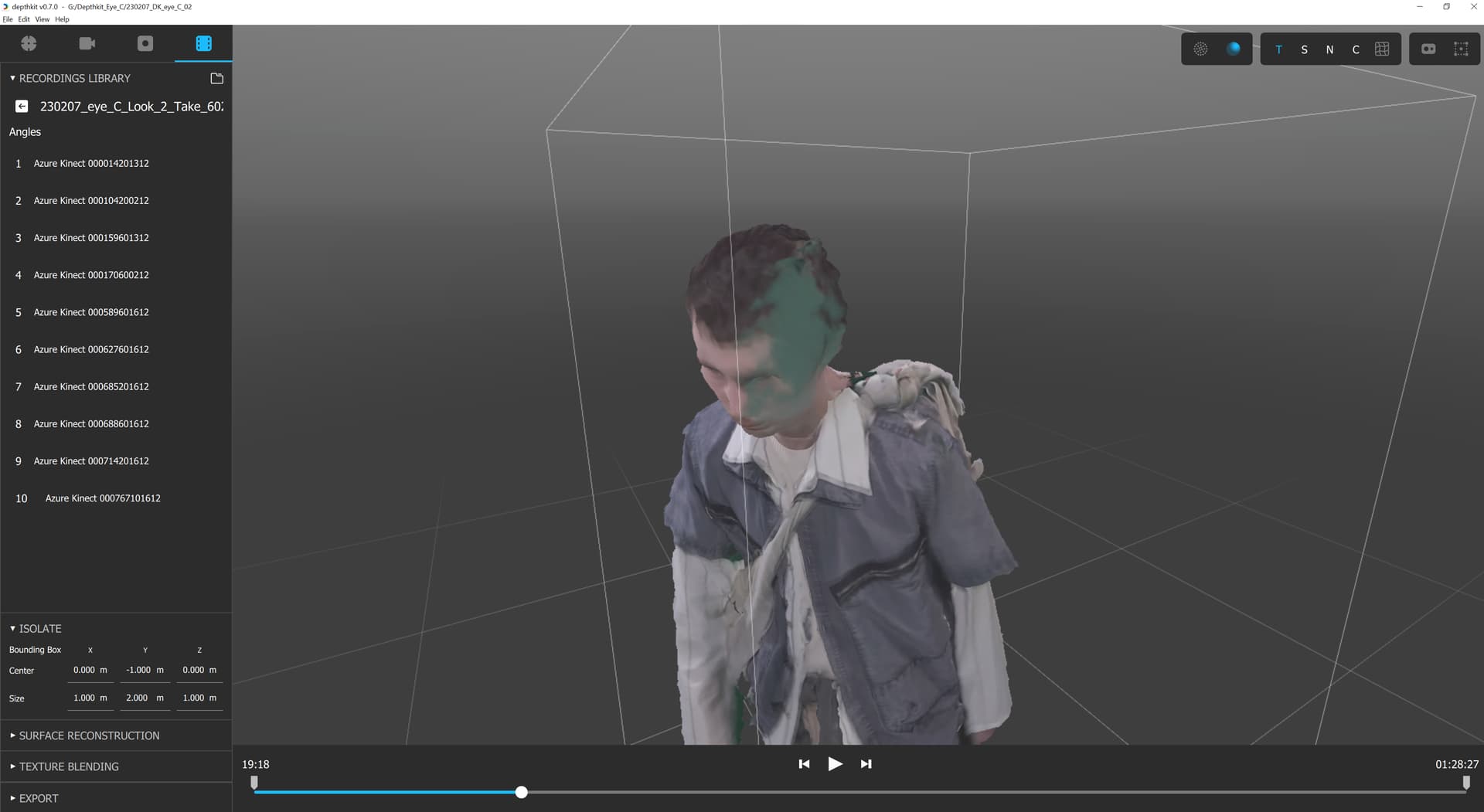

Unfortunately I don’t have a great workaround for you just yet. One brute-force solution is to remove the offending perspectives from the capture.

We discussed manually cropping the input or output, but that would involve a lot of very precise, error-prone frame arithmetic and remapping, so it is not likely to be workable.

After this latest sprint, we will consider adding crop back to address this specific issue.

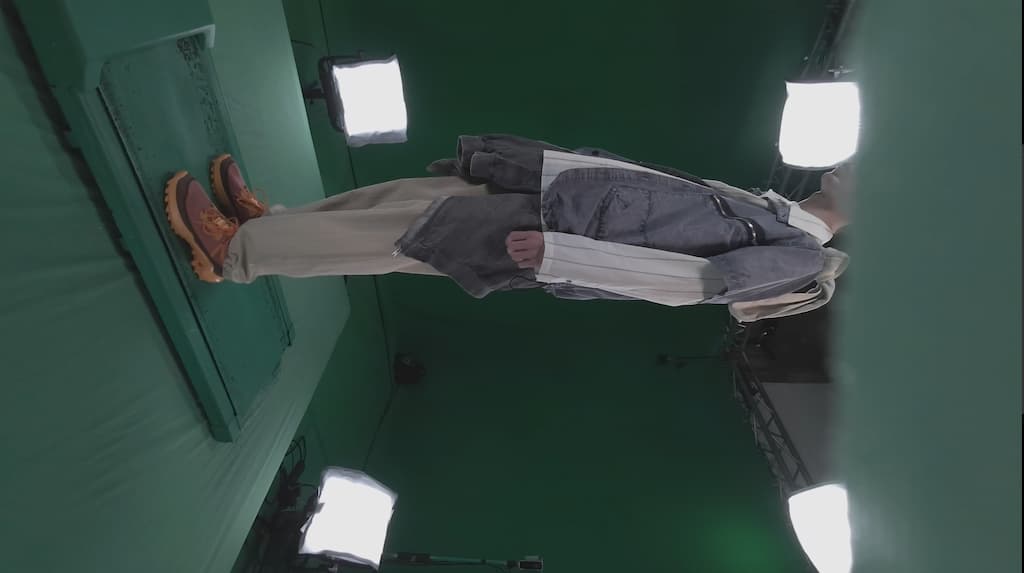

Regarding the lens flares, they are definitely an issue that we are constantly fighting.

We have used lens hoods that don’t occlude the color frames. We also often use ring lights that are small and bright, or softboxes placed higher up, out of the picture.

Other customers have invested in polarization, which can be expensive but works well.

One team shared the following for polarization:

To achieve this effect we have polarised all the lights in our Cyber Rig to the same plane using filter mounts made for our IDA Tronic flashes. Large polarization sheets can also be purchased and cut to cover most light sizes; however, they all need to be aligned to the same plane as well.

We designed and 3D printed some simple filter mounts which slide over the edge of the Kinect (see attached) so that a polarization filter can be cut and held flat over the RGB camera. The tolerance on this cut polarization sheet needs to be tight, as the RGB camera is very close to the depth camera and the filter will clip the IR depth data if it covers the depth sensor. The polarization sheet needs to be placed into the mount over the RGB cam so that it is at 90 degrees to the polarization plane of the environment source lighting, thus cross-polarizing the texture data.
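As a rough illustration of why the 90 degree alignment described above matters (this is my own sketch, not something from that team): for an ideal linear polarizer, Malus's law says the fraction of polarized light that gets through is cos² of the angle between the light's polarization plane and the filter. Specular glare mostly keeps the polarization of the lights, so it is what gets cut. The function name here is made up for the example, and real filters leak more than the ideal case.

```python
import math


def transmitted_fraction(angle_deg: float) -> float:
    """Malus's law for an ideal linear polarizer.

    angle_deg is the angle between the polarization plane of the
    incoming light and the filter's transmission axis.
    Returns the fraction of polarized light that passes (0.0 to 1.0).
    """
    return math.cos(math.radians(angle_deg)) ** 2


if __name__ == "__main__":
    # Compare a perfectly crossed filter (90) with slightly misaligned ones.
    for angle in (0, 45, 80, 85, 90):
        print(f"{angle:>2} deg -> {transmitted_fraction(angle):.3f}")
```

Even a filter that is 10 degrees off from fully crossed still passes about 3% of the polarized glare in this idealized model, which is why the lights and the camera filter all need to be lined up carefully.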

Let me know how this all sounds.