Hi all,

As I’m sure most of you have seen, Apple has finally unveiled its XR device, the Apple Vision Pro.

- We posted a blog with our first impressions here. In short, we’re excited and aligned with Apple’s vision.

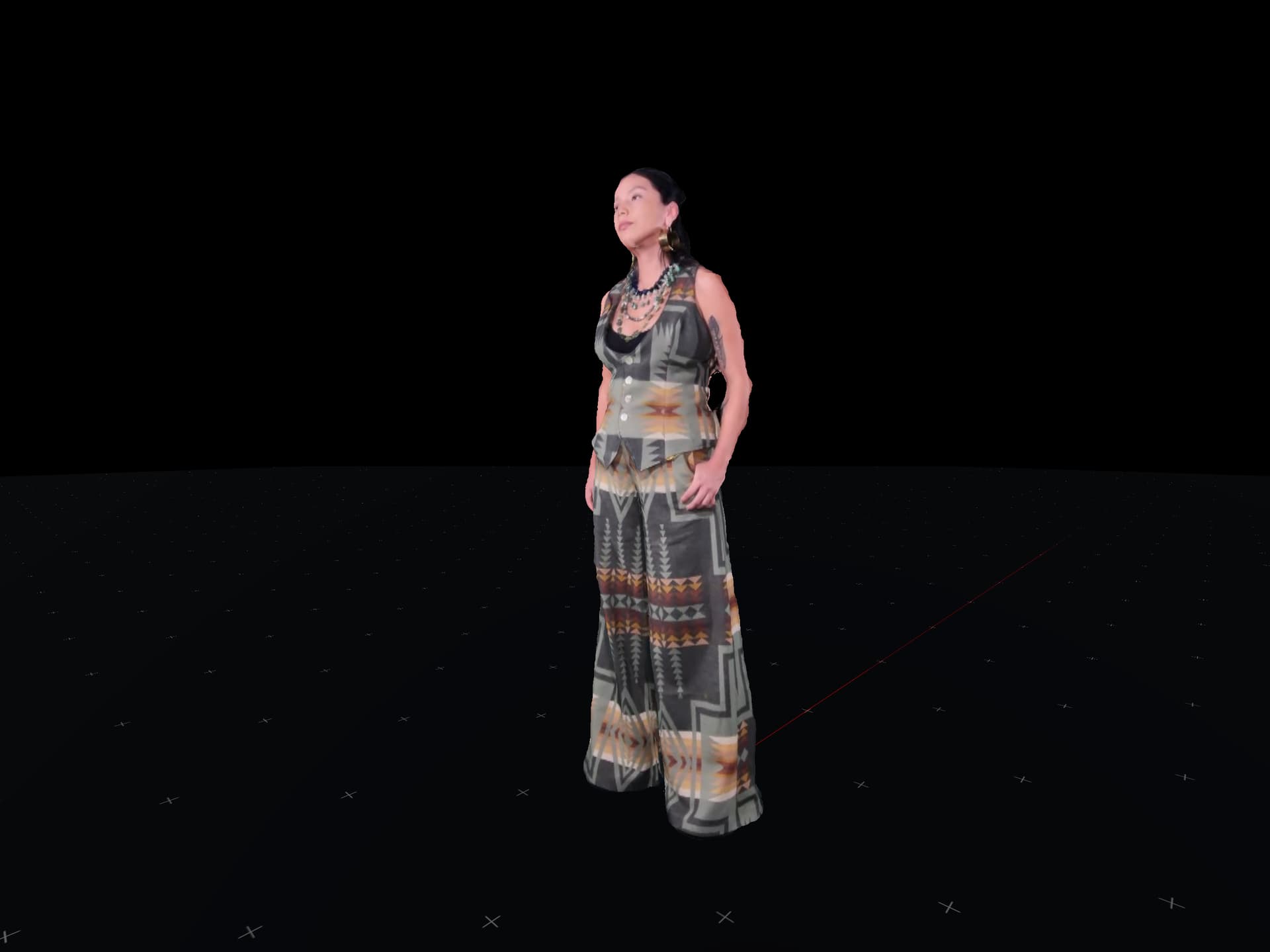

The question is how Depthkit captures will be supported on Vision Pro. There are some considerations here that we are beginning to look at, so I’m opening this thread as a community discussion point. Feel free to jump in to discuss, ask questions, or share your point of view on the Apple Vision Pro (h/t @Andrew @NikitaShokhov 😉).

Our intention is to support Depthkit playback on Apple Vision Pro through Unity by the time the headset launches next year, if not sooner. However, the platform clearly introduces some complexity that we still need to work through.

Here’s what we see so far:

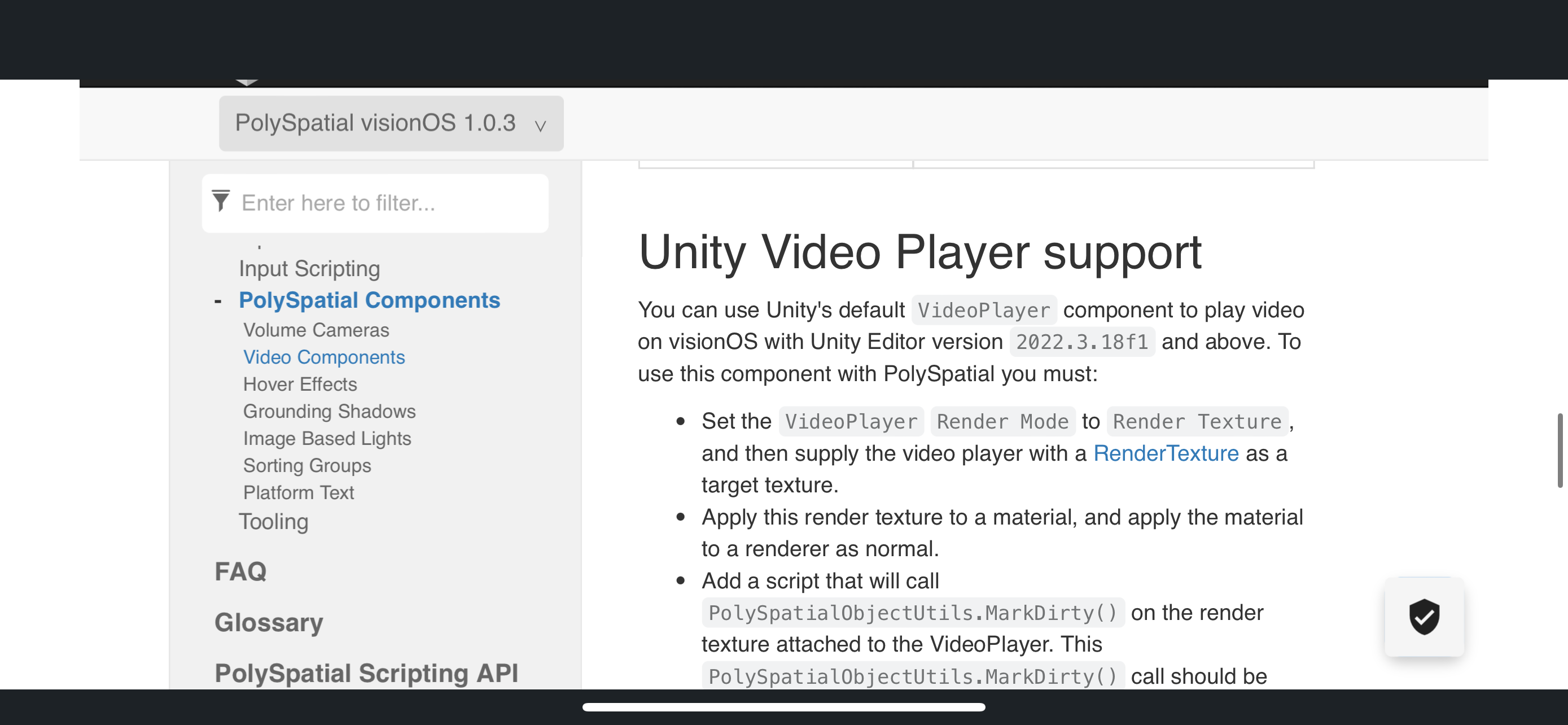

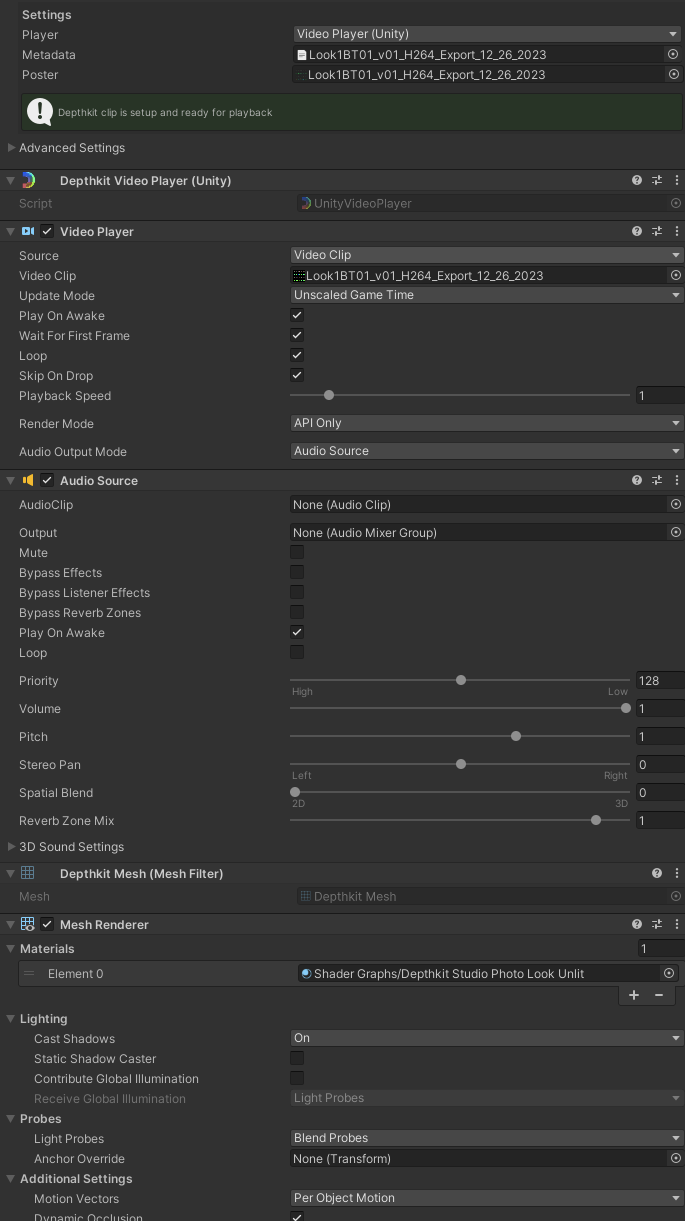

- Unity 2022 is required. Depthkit currently officially supports Unity 2020.3, so we plan to upgrade to 2022 in an upcoming phase of the Depthkit Unity plug-in release.

- Vision Pro has two rendering modes in Unity: Immersive and Fully Immersive. Each has different shader restrictions.

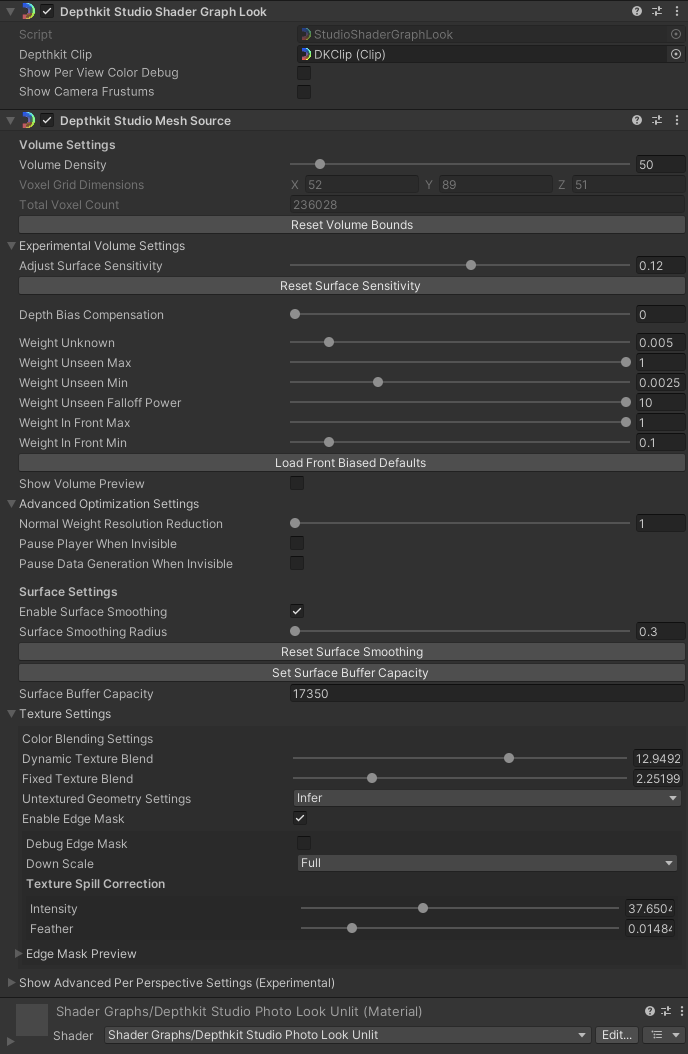

Based on our first look at the developer videos and documentation, Immersive mode will be a challenging fit for Depthkit because it does not support “hand-written shaders”, which I interpret to mean that nothing outside the standard Shader Graph nodes will work. Although Depthkit uses Shader Graph, we rely on custom graphs that may not work in this mode.

On the other hand, Fully Immersive mode seems more promising, as it more closely mirrors how Unity has handled XR rendering on other platforms. It appears to support the full shader system in URP (and in the Built-in Render Pipeline, which Unity says is supported but will not receive updates).

We have applied to Unity’s beta program and will begin testing once we have access to the device simulators. Watch this thread for updates.

If anyone has any questions or perspectives to share, jump in!